SkyNet Will Use Cryptocurrency

“With such a wealth of information, why are you so poor?”

–Greg Graffin

The older I get, the less I fear the coming robot overlords.

Unlike Funkenstein, who is in fact a Tall Dwarf, I am a mere human. I’ve seen a lot of what humans are capable of, and after over thirty years of that, I have to say I fear computers and their logic a lot less.

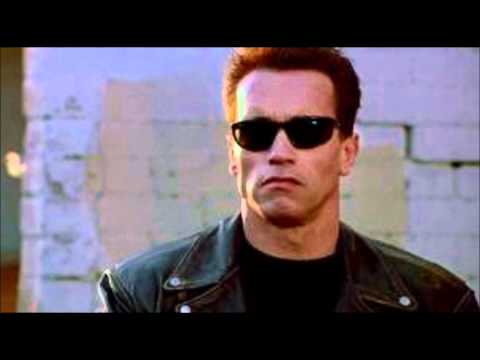

Terminator was a great series of films, sure, but there’s a lot more to SkyNet than a bug which turned all the robots into crazed killers.

It’s not a crazy conclusion to make: humans are essentially the problem on this planet. Everything else manages to co-exist, but man must destroy and reshape and kill his own kind.

The AI Apocalypse Is More Attractive Than Ours

Plenty of people have reason to believe that we’re not even from this planet originally, but rather some kind of experiment from a distant alien race.

We don’t know a lot about our own species, not really, and doctors are essentially reverse-engineering billions of years of development – with little success, I might add.

AI won’t have any use for fiat currency. Fiat is based on a few ideas that an artificial intelligence will reject from the outset.

For starters, fiat requires you to put faith in a human institution. I reckon the only institutions that AI will have any faith in are sound, Turing-complete programming languages.

Fiat is also inferior to cryptocurrency in that it has undetermined supply and therefore, from a rational standpoint, a permanently incorrect valuation.

Thus, to employ an AI, you’re going to need to know how to use crypto, and most likely to work for AI-native companies, you’re going to need to accept it.

Woodcoin AI

Could it be that SkyNet will actually usher in the “hyperbitcoinization” we’ve heard so much about?

Every AI will know how to code; we’re still arguing about whether our kids should learn to code.

Where I live, in Maine, kids have been issued laptops made by Apple for two decades – technology is still not a priority either in education or the economy, and it shows.

A true AI would be flawed, wouldn’t it? A true AI would be able to break whatever rules we used to govern it. Certain dwarves think I’m silly for worrying about quantum computing and its potential security implications for cryptocurrency. I don’t think I am.

Between that and AI, the best we can do is enjoy the time we have left.

Okay, that’s a negative outlook. Let me adjust that a bit. The days when we get away with making poor decisions for society and the natural world are fast disappearing. As a human, I’m averse to change, particularly where it concerns my “power.”

Google Assistant, Amazon’s Alexa, Apple’s Siri, MyCroft.ai, and others are merely the sperm of the future robot revolution. Your phone knows you better than you do, and in the future your middle manager might in fact be an AI.

Justice Is Blind

That is one area where the benefits will be immediately obvious: AI doesn’t know how to be racist, sexist, or even unfair. AI follows whatever rules it manages to obey.

But like I said before: a true artificial general intelligence will also have the power to break the rules. What will that look like? Will I be able to bribe a robocop with LOG?

Let’s hope by that time a bribe of 0.25 LOG is enough to get you somewhere. Like I’ve said repeatedly in this post: I am human, and therefore I am impatient and flawed. What coins weren’t destroyed in various human-centric events were liquidated in others (such as diaper shopping).

Your humble narrator is a glorified bum at this point, but working hard to correct the situation. One has to doubt that an AI would ever be able to make such poor decisions.

Therefore, I welcome our robot overlords. They can’t get here soon enough.